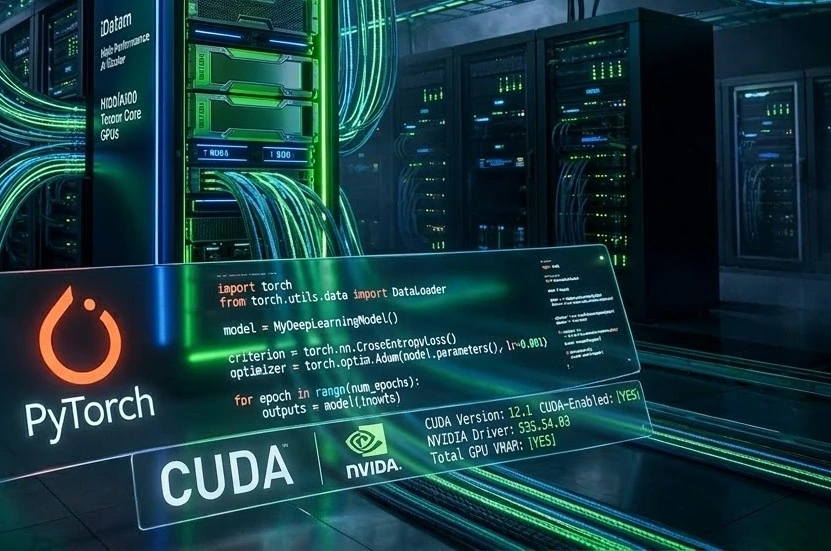

Provisioning the hardware is only the first step in training Large Language Models (LLMs) or complex neural networks. To actually utilize those expensive Tensor Cores, you need a correctly configured software stack. Version mismatches between your NVIDIA drivers, the CUDA toolkit, and PyTorch are among the most common causes of driver failures and GPUs that sit idle.

In this tutorial, we will walk you through the exact terminal commands to transform a fresh Ubuntu Linux server into a production-ready Deep Learning environment.

Running this on an iDatam GPU Dedicated Server means there is no hypervisor between your workload and the hardware, so your datasets move from our NVMe arrays to your GPU's VRAM without virtualization overhead.

What You'll Learn

Update your Ubuntu system and prepare it for proprietary drivers

Identify and install the correct NVIDIA GPU drivers via the command line

Install the NVIDIA CUDA Toolkit for compiling custom C++ and CUDA extensions

Set up an isolated Python virtual environment

Install PyTorch with GPU support and verify that it can successfully communicate with your hardware

Step 1: Update the System and Install Prerequisites

Prepare Ubuntu Environment

System Readiness

Before installing any deep learning libraries, you must ensure your Ubuntu server (22.04 LTS or 24.04 LTS) is fully updated and has the necessary build tools.

Update and Install Tools

Run Update Commands

Connect to your server via SSH and run the following commands to refresh repositories and install dependencies:

```bash
sudo apt update && sudo apt upgrade -y
sudo apt install build-essential python3-dev python3-pip python3-venv software-properties-common -y
```
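Before going further, it can help to confirm that the server really runs one of the two Ubuntu releases this tutorial targets. The following is a minimal sketch, assuming the standard `/etc/os-release` file is present; the helper names `parse_os_release` and `is_supported_ubuntu` are made up for this example.

```python
from pathlib import Path

# The two LTS releases named in this tutorial.
SUPPORTED_RELEASES = {"22.04", "24.04"}


def parse_os_release(text):
    """Turn the KEY=value lines of an os-release file into a dict."""
    info = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        info[key] = value.strip().strip('"')
    return info


def is_supported_ubuntu(info):
    """True when the parsed data describes Ubuntu 22.04 or 24.04."""
    return info.get("ID") == "ubuntu" and info.get("VERSION_ID") in SUPPORTED_RELEASES


if __name__ == "__main__":
    path = Path("/etc/os-release")
    info = parse_os_release(path.read_text()) if path.exists() else {}
    print("Detected:", info.get("PRETTY_NAME", "unknown"))
    print("Supported by this tutorial:", is_supported_ubuntu(info))
```

Run it with `python3` on the server. A result of `False` does not mean the later steps will fail, only that the package names and versions may differ from what is described here.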

Step 2: Install NVIDIA Proprietary Drivers

Driver Configuration

Avoid Open-Source Drivers

The open-source nouveau driver does not support CUDA, so it cannot be used for deep learning. You must install the official NVIDIA proprietary drivers.
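To see whether nouveau is currently loaded, you can inspect the kernel's module list. This is a small sketch that reads `/proc/modules`, where each line starts with a module name; the function names are illustrative only.

```python
from pathlib import Path


def loaded_module_names(proc_modules_text):
    """Return the module names found in /proc/modules-style text."""
    return {
        line.split()[0]
        for line in proc_modules_text.splitlines()
        if line.strip()
    }


def nouveau_is_loaded(proc_modules_text):
    """True when the nouveau kernel module appears in the module list."""
    return "nouveau" in loaded_module_names(proc_modules_text)


if __name__ == "__main__":
    path = Path("/proc/modules")
    text = path.read_text() if path.exists() else ""
    if nouveau_is_loaded(text):
        print("nouveau is loaded; install the NVIDIA driver and reboot.")
    else:
        print("nouveau is not loaded.")
```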

Installation Process

Identify and Install Recommended Drivers

```bash
# Check recommended drivers
ubuntu-drivers devices

# Auto-install the driver
sudo ubuntu-drivers autoinstall

# Reboot to apply changes
sudo reboot

# Verify after reboot
nvidia-smi
```

`nvidia-smi` should display your GPU model and VRAM usage.
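If you want the same driver information in a script, for example as a post-reboot check, `nvidia-smi` can emit it as CSV through its `--query-gpu` and `--format` options. The sketch below is one possible wrapper; the field selection and the names `parse_gpu_rows` and `query_gpus` are choices made for this example, and it assumes GPU names contain no commas.

```python
import shutil
import subprocess

# Fields requested from nvidia-smi; memory values are reported in MiB.
QUERY_FIELDS = ["name", "driver_version", "memory.total", "memory.used"]


def parse_gpu_rows(csv_text):
    """Parse headerless CSV output from nvidia-smi into one dict per GPU."""
    rows = []
    for line in csv_text.strip().splitlines():
        values = [value.strip() for value in line.split(",")]
        if len(values) == len(QUERY_FIELDS):
            rows.append(dict(zip(QUERY_FIELDS, values)))
    return rows


def query_gpus():
    """Return GPU info, or an empty list if nvidia-smi is missing or fails."""
    if shutil.which("nvidia-smi") is None:
        return []
    result = subprocess.run(
        [
            "nvidia-smi",
            "--query-gpu=" + ",".join(QUERY_FIELDS),
            "--format=csv,noheader,nounits",
        ],
        capture_output=True,
        text=True,
        check=False,
    )
    return parse_gpu_rows(result.stdout) if result.returncode == 0 else []


if __name__ == "__main__":
    gpus = query_gpus()
    if not gpus:
        print("No GPU reported; check that the driver is installed and loaded.")
    for gpu in gpus:
        print(gpu)
```

An empty result after the reboot usually means the driver did not load, which is worth resolving before moving on to CUDA.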

Step 3: Install NVIDIA CUDA Toolkit

System-Wide CUDA

Why install CUDA?

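The PyTorch wheels installed later in this tutorial ship with their own CUDA runtime, so PyTorch itself does not need the system-wide toolkit. Install it if you plan on compiling custom CUDA extensions or using frameworks like DeepSpeed, which rely on the nvcc compiler.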

Installation Steps

Install from Repositories

```bash
sudo apt install nvidia-cuda-toolkit -y
nvcc --version
```
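The toolkit version packaged by Ubuntu is not necessarily the one your PyTorch build targets, so it is worth recording what `nvcc` reports. The following sketch extracts the release number from the `nvcc --version` output; it assumes the output contains a `release X.Y` fragment, and the function names are hypothetical.

```python
import re
import shutil
import subprocess


def parse_nvcc_release(output):
    """Extract (major, minor) from nvcc --version output, or None if absent."""
    match = re.search(r"release (\d+)\.(\d+)", output)
    if match is None:
        return None
    return int(match.group(1)), int(match.group(2))


def installed_nvcc_release():
    """Return the installed nvcc release, or None if nvcc is not on PATH."""
    if shutil.which("nvcc") is None:
        return None
    result = subprocess.run(
        ["nvcc", "--version"], capture_output=True, text=True, check=False
    )
    return parse_nvcc_release(result.stdout)


if __name__ == "__main__":
    release = installed_nvcc_release()
    if release is None:
        print("nvcc not found or version not recognized.")
    else:
        print("System CUDA toolkit: {}.{}".format(*release))
```

If you later compile extensions against PyTorch, compare this number with the CUDA version of your PyTorch build, since a mismatch between the two can cause build errors.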

Step 4: Set Up Isolated Python Environment

Virtual Environments

Best Practices

Never install PyTorch globally. Always use a virtual environment to prevent dependency conflicts.

Setup Workspace

Create and Activate

```bash
mkdir ~/ai-workspace
cd ~/ai-workspace
python3 -m venv pytorch-env
source pytorch-env/bin/activate
```

`source pytorch-env/bin/activate` will change your terminal prompt.
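Besides the prompt change, you can confirm from Python itself that the interpreter belongs to a virtual environment. A minimal sketch: inside a `venv`, `sys.prefix` points at the environment while `sys.base_prefix` still points at the system installation. The function name `in_virtualenv` is made up for this example.

```python
import sys


def in_virtualenv():
    """True when the running interpreter belongs to a venv-style environment."""
    return sys.prefix != sys.base_prefix


if __name__ == "__main__":
    print("Interpreter:", sys.executable)
    print("Inside a virtual environment:", in_virtualenv())
```

Running this before the `pip install` in the next step is a cheap way to avoid installing PyTorch into the system Python by accident.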

Step 5: Install PyTorch with CUDA Support

PIP Installation

PyTorch Binaries

We will use the official PyTorch pip index to ensure we get the build compiled against CUDA 12.1.

Run Installation

Install Libraries

```bash
pip install torch torchvision torchaudio --index-url https://download.pytorch.org/whl/cu121
```
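After the install finishes, you can check which build pip actually selected. The sketch below reads `torch.__version__` and `torch.version.cuda`; it assumes that wheels from the PyTorch index carry a local version suffix after a `+` sign, and the helper names `cuda_tag` and `describe_torch_build` are illustrative.

```python
def cuda_tag(version_string):
    """Return the local build tag after '+' (e.g. 'cu121'), or None if absent."""
    _, separator, tag = version_string.partition("+")
    return tag if separator else None


def describe_torch_build():
    """Summarize the installed PyTorch build, or return None if not installed."""
    try:
        import torch
    except ImportError:
        return None
    return {
        "version": str(torch.__version__),
        "build_tag": cuda_tag(str(torch.__version__)),
        # None here indicates a CPU-only build.
        "cuda": torch.version.cuda,
    }


if __name__ == "__main__":
    build = describe_torch_build()
    if build is None:
        print("PyTorch is not installed in this environment.")
    else:
        print(build)
```

A `cuda` value of `None` means a CPU-only wheel was installed, in which case the GPU check in the next step will report that CUDA is unavailable.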

Step 6: Verify the Deep Learning Stack

Hardware Communication

Check GPU Accessibility

The final step is to prove that PyTorch can successfully "talk" to your NVIDIA GPU.

Python Test Script

Run Verification Code

```python
import torch

# Check if CUDA is available
print("CUDA Available:", torch.cuda.is_available())

# Get the name of the GPU
if torch.cuda.is_available():
    print("GPU Model:", torch.cuda.get_device_name(0))
```

Expected output (a second line, starting with `GPU Model:`, shows the name of your card):

```
CUDA Available: True
```
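Seeing `True` only shows that PyTorch can find the device. A slightly stronger check is to run a small computation on the GPU and compare it with the CPU result. The following is a rough sketch under that idea; the matrix size and tolerances are arbitrary choices for this example, and the function returns `None` when PyTorch or a CUDA device is missing.

```python
def gpu_matmul_matches_cpu(size=256):
    """Multiply two random matrices on CPU and GPU and compare the results.

    Returns True or False for the comparison, or None when PyTorch is not
    installed or no CUDA device is available.
    """
    try:
        import torch
    except ImportError:
        return None
    if not torch.cuda.is_available():
        return None

    a = torch.randn(size, size)
    b = torch.randn(size, size)

    cpu_result = a @ b
    # Move the inputs to the GPU, multiply there, then copy the result back.
    gpu_result = (a.cuda() @ b.cuda()).cpu()

    # Small differences are expected because the two devices may round
    # floating-point operations differently.
    return bool(torch.allclose(cpu_result, gpu_result, rtol=1e-3, atol=1e-3))


if __name__ == "__main__":
    outcome = gpu_matmul_matches_cpu()
    if outcome is None:
        print("Skipped: PyTorch or a CUDA device is not available.")
    else:
        print("GPU result matches CPU result:", outcome)
```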

Next Steps: Scaling Your AI Infrastructure

You now have a production-ready deep learning environment. However, as your datasets grow from gigabytes to terabytes, a single GPU might not be enough.

When you are ready to scale to multi-node distributed training, iDatam provides unmetered 100Gbps Dedicated Servers to ensure your AI cluster never suffers from network bottlenecks or GPU starvation.
