For enterprise databases, virtualization platforms (like Proxmox), and massive AI datasets, a single point of failure is unacceptable. If a physical drive dies and takes your database offline, your business stops.

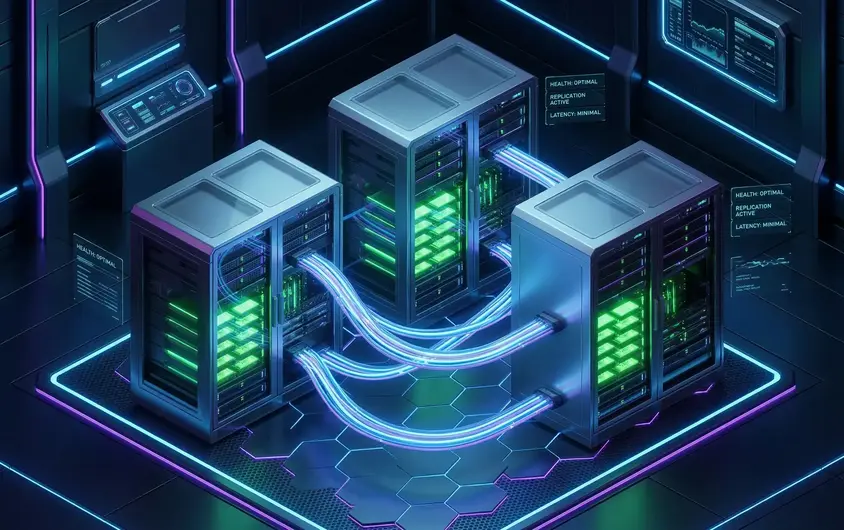

A widely used open-source answer to this problem is Ceph, a distributed storage platform that replicates your data across multiple physical servers. If a drive or an entire server fails, the cluster re-replicates the affected data automatically, and clients can keep reading and writing while it heals.

However, because Ceph constantly replicates data across the network, deploying it on slow SATA drives or 1Gbps networks results in crippling latency. In this tutorial, we will show DevOps engineers how to link three iDatam bare-metal servers to create an ultra-fast High-Availability (HA) Ceph cluster.

By running this on our Storage Dedicated Servers equipped with PCIe Gen 5 NVMe drives and unmetered 100Gbps internal network uplinks, you keep replication from becoming the bottleneck for your writes.

What You'll Learn

The architecture of a minimal HA Ceph cluster (Monitors, Managers, and OSDs).

How to configure internal network hostnames across three Ubuntu servers.

How to use the modern cephadm utility to bootstrap your primary cluster server.

How to add worker servers to the cluster using SSH orchestration.

How to provision raw NVMe drives as Object Storage Daemons (OSDs) for hyper-fast data replication.

Prerequisites

To build a true High-Availability cluster, you need:

Three (3) Bare-Metal Servers: We will name them ceph-server1, ceph-server2, and ceph-server3.

Two Network Interfaces per Server: One for public internet access, and a high-speed (10Gbps or 100Gbps) backend private network for cluster replication.

Empty NVMe Drives: At least one raw, unformatted NVMe drive on each server (e.g., /dev/nvme1n1) dedicated purely to Ceph.

Ubuntu 22.04 LTS or 24.04 LTS installed on the primary OS drive.

Step 1: Configure Hostnames and Internal Networking

Ceph relies heavily on hostname resolution across the internal network. Connect to all three servers and update their /etc/hosts files.

On all three servers, edit the hosts file:

sudo nano /etc/hosts

Add the private backend IPs of all your servers. It should look like this:

10.0.0.11 ceph-server1

10.0.0.12 ceph-server2

10.0.0.13 ceph-server3

Verify that the servers can ping each other via hostname on the private network:

ping -c 3 ceph-server2
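Before moving on, it can help to confirm that the hosts file on each server really contains all three mappings. The following is a minimal sketch, assuming the example hostnames and IPs used above; the function names lookup_ip and check_hosts are illustrative and not part of any Ceph tooling.

```shell
#!/bin/sh
# Sketch: confirm that a hosts file maps each cluster hostname to the expected
# private IP. Hostnames and IPs are the example values from this tutorial.

# lookup_ip FILE HOSTNAME -> prints the first IP mapped to HOSTNAME in FILE
lookup_ip() {
    awk -v h="$2" '
        /^[ \t]*#/ { next }    # skip comment lines
        { for (i = 2; i <= NF; i++) if ($i == h) { print $1; exit } }
    ' "$1"
}

# check_hosts FILE -> prints one line per expected host; returns 1 on any mismatch
check_hosts() {
    rc=0
    while read -r ip name; do
        found=$(lookup_ip "$1" "$name")
        if [ "$found" = "$ip" ]; then
            echo "ok   $name -> $ip"
        else
            echo "FAIL $name: expected $ip, found '${found:-nothing}'"
            rc=1
        fi
    done <<EOF
10.0.0.11 ceph-server1
10.0.0.12 ceph-server2
10.0.0.13 ceph-server3
EOF
    return $rc
}

# Usage on each server:
#   check_hosts /etc/hosts
```

The check only reads the file, so it is safe to run repeatedly; it does not replace the ping test, which also proves that the private network itself is reachable.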

Step 2: Install Docker and Cephadm on Server 1

Modern Ceph deployments use cephadm, which deploys Ceph components as containerized services for easier upgrades and management. We will bootstrap the cluster from ceph-server1.

Log into ceph-server1 and install the prerequisites:

sudo apt update && sudo apt upgrade -y

sudo apt install curl docker.io python3 -y

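Next, fetch the cephadm standalone script, make it executable, and use it to add the Ceph Quincy repository. Then install the packaged cephadm and ceph-common tools from that repository: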

curl --silent --remote-name --location https://github.com/ceph/ceph/raw/quincy/src/cephadm/cephadm

chmod +x cephadm

sudo ./cephadm add-repo --release quincy

sudo apt update

sudo apt install cephadm ceph-common -y
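A quick way to confirm that this step left the expected tools on ceph-server1 is to look each one up on the PATH. This is a small sketch; the helper name missing_tools is hypothetical, and the list only covers the commands installed in this step (docker.io provides docker, ceph-common provides ceph).

```shell
#!/bin/sh
# missing_tools NAME... -> prints each command name that is not found on PATH
missing_tools() {
    for tool in "$@"; do
        command -v "$tool" >/dev/null 2>&1 || echo "$tool"
    done
}

# Commands this step is expected to provide.
missing=$(missing_tools curl docker python3 cephadm ceph)
if [ -n "$missing" ]; then
    # $missing is left unquoted on purpose so the names print on one line
    echo "Missing on this host:" $missing
else
    echo "All expected tools are on PATH."
fi
```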

Step 3: Bootstrap the Ceph Cluster

Now, we initialize the cluster on ceph-server1. You must specify the private IP address of ceph-server1 so Ceph knows which network to use for cluster communication.

sudo cephadm bootstrap --mon-ip 10.0.0.11

This process takes a few minutes. It deploys the initial Monitor (MON) and Manager (MGR) daemons. When it finishes, the terminal will output a success message containing the URL for the Ceph Dashboard and your auto-generated admin password. Save these credentials!
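If the bootstrap fails early, one thing worth checking is whether the address passed to --mon-ip is actually configured on ceph-server1. The sketch below assumes the example address 10.0.0.11 and parses the output of ip -o -4 addr show, where the fourth field is the address with its prefix length; has_ip is an illustrative helper name.

```shell
#!/bin/sh
# has_ip IP -> reads `ip -o -4 addr show` output on stdin and succeeds
# if IP is one of the listed addresses (the prefix length is ignored)
has_ip() {
    awk -v want="$1" '
        { split($4, a, "/"); if (a[1] == want) found = 1 }
        END { exit (found ? 0 : 1) }
    '
}

MON_IP=10.0.0.11   # example value from this tutorial
if ip -o -4 addr show 2>/dev/null | has_ip "$MON_IP"; then
    echo "$MON_IP is configured locally and can be passed to --mon-ip"
else
    echo "$MON_IP not found on this host; check the private interface first"
fi
```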

Step 4: Copy SSH Keys and Add Servers 2 & 3

For cephadm to deploy services to ceph-server2 and ceph-server3, it needs passwordless SSH access.

During the bootstrap, cephadm generated a public SSH key. View it and copy it to the other servers:

sudo cat /etc/ceph/ceph.pub

(Copy the output, then log into server 2 and server 3, and add this key to their /root/.ssh/authorized_keys files).

Alternatively, from ceph-server1, you can use ssh-copy-id:

sudo ssh-copy-id -f -i /etc/ceph/ceph.pub root@ceph-server2

sudo ssh-copy-id -f -i /etc/ceph/ceph.pub root@ceph-server3

Now, register the new servers with the cluster. The name you pass should match what the hostname command prints on that server:

sudo ceph orch host add ceph-server2 10.0.0.12

sudo ceph orch host add ceph-server3 10.0.0.13

Verify the servers are attached:

sudo ceph orch host ls
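When you add more than two servers, the key-copy and host-add commands above can be wrapped in a small loop. The following sketch assumes the same hostnames and IPs; the run and add_host helpers and the DRY_RUN variable are illustrative. By default it only prints the commands so you can review them first.

```shell
#!/bin/sh
# Sketch: repeat the key-copy and host-add steps for each extra server.
# DRY_RUN=1 (the default here) only prints the commands; set DRY_RUN=0 to run them.
DRY_RUN=${DRY_RUN:-1}

# run CMD... -> prints CMD in dry-run mode, otherwise executes it
run() {
    if [ "$DRY_RUN" = "1" ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

# add_host NAME IP -> install the cluster's public key on NAME, then register it
add_host() {
    run sudo ssh-copy-id -f -i /etc/ceph/ceph.pub "root@$1"
    run sudo ceph orch host add "$1" "$2"
}

add_host ceph-server2 10.0.0.12
add_host ceph-server3 10.0.0.13
```

Running it once with the default dry-run setting and then again with DRY_RUN=0 keeps the review step separate from the change itself.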

Step 5: Provision the NVMe Drives as OSDs

Your cluster is online, but it has no storage capacity. We need to tell Ceph to use the empty, raw NVMe drives on each server as Object Storage Daemons (OSDs).

First, list all available storage devices across the cluster to find the exact drive paths:

sudo ceph orch device ls

Assuming your raw NVMe drive is /dev/nvme1n1 on all three servers, you can add them individually:

sudo ceph orch daemon add osd ceph-server1:/dev/nvme1n1

sudo ceph orch daemon add osd ceph-server2:/dev/nvme1n1

sudo ceph orch daemon add osd ceph-server3:/dev/nvme1n1

(Alternatively, you can tell Ceph to automatically consume all available, unformatted drives using sudo ceph orch apply osd --all-available-devices, but explicit assignment is safer for enterprise setups).
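Ceph will only use a device that it considers available, which among other things means no partitions, no filesystem, and no mount. If a drive does not show up as available, a local check like the sketch below can hint at why. It assumes the example device /dev/nvme1n1 and reads lsblk key="value" output; looks_unused is a hypothetical helper, and ceph orch device ls remains the authoritative view.

```shell
#!/bin/sh
# looks_unused -> reads `lsblk -P -o NAME,TYPE,FSTYPE,MOUNTPOINT DEVICE` output
# on stdin; succeeds only if there is exactly one row (no partitions) and that
# row has an empty FSTYPE and an empty MOUNTPOINT
looks_unused() {
    awk '
        { rows++ }
        !/FSTYPE=""/ || !/MOUNTPOINT=""/ { used = 1 }
        END { exit ((rows == 1 && !used) ? 0 : 1) }
    '
}

DEV=/dev/nvme1n1   # example device path from this tutorial
if lsblk -P -o NAME,TYPE,FSTYPE,MOUNTPOINT "$DEV" 2>/dev/null | looks_unused; then
    echo "$DEV looks unused"
else
    echo "$DEV is missing, or has partitions, a filesystem, or a mount; inspect it before adding it"
fi
```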

Step 6: Verify Cluster Health

Check the status of your new High-Availability NVMe cluster:

sudo ceph -s

You should see health: HEALTH_OK, although it can take a minute or two after the OSDs are created. You now have a 3-server distributed storage cluster. With Ceph's default three-way replication and one copy per server, it can lose a single server without losing data.
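Because the cluster may need a little time to settle, polling the health status can be more convenient than re-running the command by hand. This is a sketch, assuming that ceph health prints a line starting with HEALTH_OK once the cluster is healthy; the function name wait_healthy and its arguments are illustrative.

```shell
#!/bin/sh
# wait_healthy TRIES DELAY CMD... -> runs CMD up to TRIES times, DELAY seconds
# apart, until its output starts with HEALTH_OK; returns 1 if that never happens
wait_healthy() {
    tries=$1
    delay=$2
    shift 2
    i=0
    while [ "$i" -lt "$tries" ]; do
        health=$("$@" 2>/dev/null)
        case $health in
            HEALTH_OK*) echo "cluster healthy"; return 0 ;;
        esac
        i=$((i + 1))
        echo "attempt $i/$tries: ${health:-no response}"
        sleep "$delay"
    done
    return 1
}

# Usage on ceph-server1 (polls every 10 seconds, up to 30 times):
#   wait_healthy 30 10 sudo ceph health
```

Passing the command as arguments keeps the function easy to try out with a stand-in command before pointing it at a live cluster.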

The Network Bottleneck Warning

Ceph is incredibly powerful, but it generates massive amounts of backend "east-west" network traffic. Every time a client writes to the cluster, Ceph copies the data to OSDs on the other servers over your internal network, and the write is acknowledged only after the replicas have it.

If you attempt this on a standard 1Gbps or 10Gbps shared cloud network, the replication delay will crush your database performance.
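A rough calculation shows why link speed matters. The sketch below assumes Ceph's default replicated pool size of 3, so each client write is forwarded to two further OSDs; it uses decimal units and ignores protocol overhead, recovery, and heartbeat traffic. The function name backend_gbps is illustrative.

```shell
#!/bin/sh
# backend_gbps WRITE_MB_PER_S [REPLICAS] -> approximate replication traffic in Gbit/s
# (REPLICAS defaults to 3; one copy is the primary, the rest cross the network)
backend_gbps() {
    awk -v w="$1" -v r="${2:-3}" 'BEGIN { printf "%.1f\n", w * (r - 1) * 8 / 1000 }'
}

backend_gbps 100     # prints 1.6
backend_gbps 1000    # prints 16.0
```

Under these assumptions, a sustained 100 MB/s of client writes already produces about 1.6 Gbit/s of replication traffic, more than a 1Gbps link can carry, and 1000 MB/s produces about 16 Gbit/s, more than a 10Gbps link can carry.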

iDatam 100Gbps Dedicated Servers

To achieve the sub-millisecond latency required for production databases and VM hosting, deploy your cluster on iDatam's 100Gbps Dedicated Servers. We provide unmetered, non-blocking internal network fabrics so your Ceph cluster can replicate data as fast as your PCIe Gen 5 NVMe drives can write it.
